Rushing into AI and SaaS contracts can cost you millions. Hidden risks - like losing ownership of your data, liability for vendor mistakes, or conflicts with client agreements - are buried in the fine print. Here's what you need to know upfront:

- Vendor data use: Many AI tools use your data for training, potentially benefiting competitors or violating privacy laws.

- Intellectual property (IP): Without clear terms, you may not fully own AI-generated outputs, creating conflicts with client promises.

- Liability caps: Vendors often limit their responsibility to a fraction of potential damages, leaving you exposed to major financial risks.

- Subcontractor risks: Vendors may rely on undisclosed third parties, increasing compliance and security vulnerabilities.

- Internal governance: Misaligned policies and contracts can lead to breaches, fines, and reputational harm.

Key takeaway: Always review AI and SaaS contracts thoroughly. Negotiate terms to protect your data, secure stronger IP rights, and rebalance liability risks. Establish internal policies to ensure compliance and safeguard your business.

Want the full breakdown? Learn how to spot red flags in contracts, negotiate safer terms, and align your internal practices to avoid costly mistakes.

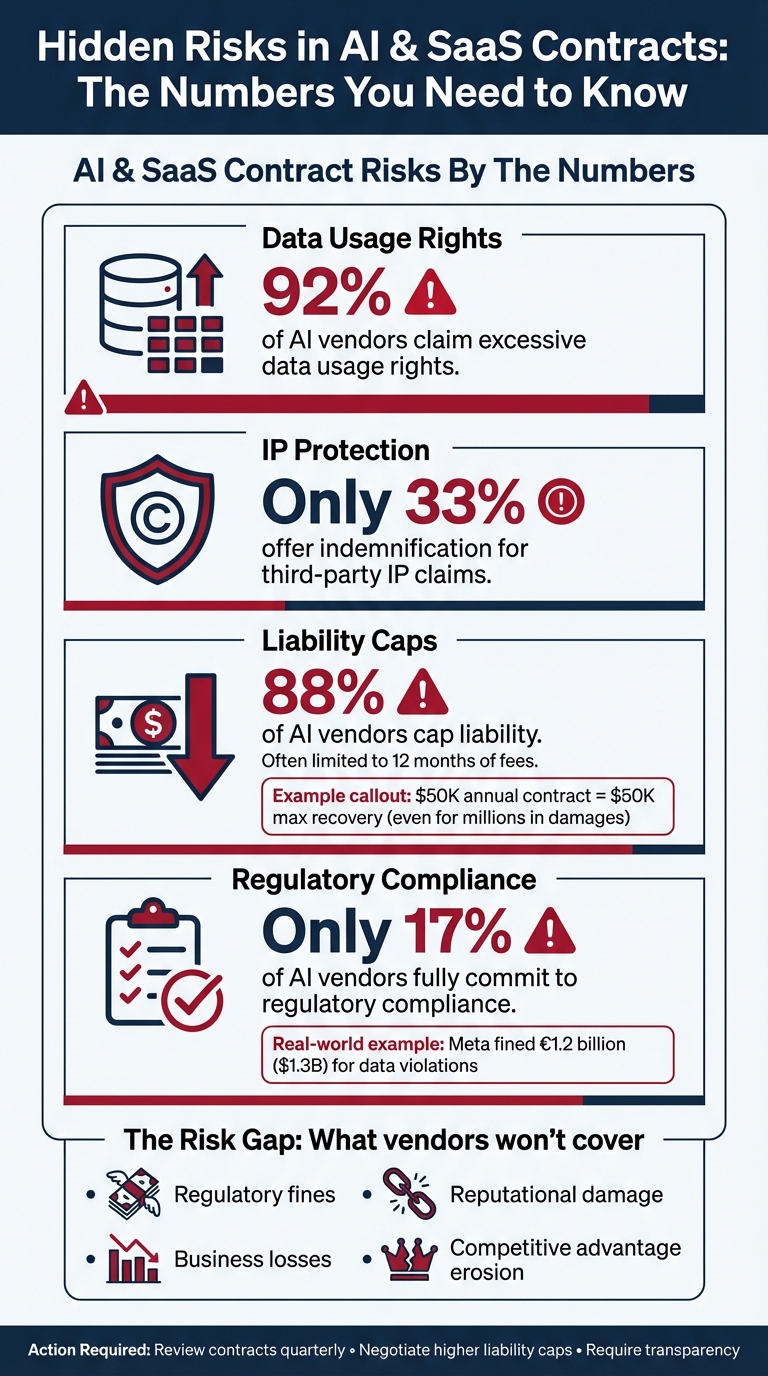

AI and SaaS Contract Risks: Key Statistics Every Business Should Know

Data Ownership and Vendor Training Rights

The Hidden Cost of Data Sharing

Every time you use proprietary data in an AI tool, you could be handing over a competitive advantage to the vendor. Many vendors use inputs like strategic prompts or customer insights to train their models, potentially applying those insights to benefit other clients - including your competitors [3]. This means that each API call could chip away at your competitive edge. To guard against this, it's essential to have clear and strict contractual prohibitions in place.

Without proper isolation of the AI model, sensitive information like trade secrets or proprietary code could reappear in outputs provided to other users [1][3]. On top of that, using customer data for training purposes might violate privacy laws such as the California Consumer Privacy Act (CCPA), under which it could be interpreted as "selling" or "sharing" personal information and lead to fines or lawsuits [5]. Even worse, submitting sensitive legal information without robust safeguards might unintentionally waive attorney-client privilege [3].

Protecting Your Proprietary Data

To address these risks, enforce strong contractual protections for your data. Insist on a clear clause that explicitly prohibits the vendor from using your data for training purposes. For example: "Vendor will not train its AI and related features using Customer Data" [5]. Additionally, ensure the vendor has agreements with their sub-providers (like OpenAI or Google) that also prohibit the use of your data for training [5]. Be aware that many vendors include an opt-out clause buried in the fine print - if you don’t actively opt out, your data might be used for training by default [6].

"If you're subscribing to a service that uses AI, make sure you don't inadvertently give the vendor rights in the contract to use your input for training. At least not without your written consent." – Jana Gouchev, Managing Attorney, Gouchev Law [1]

To further safeguard your data, explicitly define ownership in your contracts. Make it clear that you retain ownership of all input prompts and that the vendor disclaims ownership of any generated outputs [6][8]. Before finalizing agreements, compare the vendor's licensing terms with your existing client contracts. For instance, if you've promised clients full intellectual property ownership but the vendor only offers a non-transferable license, you could end up breaching your own agreements [1]. Lastly, ensure that the vendor's technical infrastructure supports these contractual promises. This means verifying that they use measures like data encryption and isolation - legal language alone won’t protect your data if the technology isn’t secure [1][5].

Intellectual Property (IP) Ownership and Licensing Risks

When Deliverables Aren't Fully Yours

IP clauses in vendor contracts can present serious challenges, often undermining what seems like straightforward promises of full ownership. These clauses can create conflicts with your client agreements, leaving your business vulnerable to significant liability.

A common scenario arises when AI-generated content you've paid for doesn't truly belong to you. This mismatch can lead to delivering work that doesn't align with your client's expectations. Many standard AI vendor agreements allow vendors to reuse or re-license outputs, which means your competitors might end up with content that's nearly identical to yours. This erodes your competitive edge. Adding to the complexity, the U.S. Copyright Office usually declines to register works created solely by AI unless there’s substantial human authorship involved. Without clear ownership rights and documented human input, AI-generated content may not qualify for copyright protection at all, leaving others free to copy it.

"If your client agreements and AI vendor contracts can't coexist, you have two options: renegotiate your AI vendor terms for better IP rights or avoid using AI tools for clients where conflicts exist." – Jana Gouchev, Managing Attorney, Gouchev Law [1]

Negotiating IP Ownership in Contracts

Before committing to any AI vendor contract, it’s crucial to ensure their licensing terms align with the promises you’ve made to your clients. For example, if you’ve guaranteed clients full ownership of deliverables but the vendor only offers a non-transferable license, you could unintentionally breach your agreements.

To protect your business, aim to include a "work made for hire" clause or negotiate an explicit assignment of all IP rights to your company. If full ownership isn’t an option, push for a transferable license that allows you to commercialize and redistribute the outputs.

Another way to strengthen your position is by adopting a human-in-the-loop approach. This involves having humans regularly review and modify AI-generated outputs, which can bolster your claim to copyright protection. Documenting the extent of human involvement is not only helpful for copyright registration but also provides essential evidence in case of infringement disputes.

Lastly, address liability risks in your contracts. Many AI vendor agreements cap liability for IP infringement at just one month’s fees, leaving your business exposed to potentially significant financial losses. Negotiating higher liability caps specific to IP risks is a necessary step to ensure you’re adequately protected.

Liability Caps and Data Privacy Risks

The Hidden Financial Risks of Liability Caps

Most SaaS and AI vendors limit their liability to the equivalent of 12 months of contract fees [10]. This means if you're paying $50,000 a year for a service, the most you could recover after a major security breach would be that same $50,000 - even if the actual damages run into the millions.
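To make the gap concrete, here is a minimal Python sketch of the arithmetic. The $50,000 annual fee comes from the example above; the $3 million damages figure and the function name `uncovered_exposure` are hypothetical, chosen only for illustration.

```python
def uncovered_exposure(annual_fee: float, damages: float, cap_months: int = 12) -> float:
    """Return the loss left with the customer after the vendor pays up to its cap."""
    cap = annual_fee * cap_months / 12   # cap expressed as N months of fees
    recoverable = min(damages, cap)      # the vendor never pays more than the cap
    return damages - recoverable


# $50,000/year contract, hypothetical $3,000,000 breach
print(uncovered_exposure(50_000, 3_000_000))  # 2950000.0
```

Setting `cap_months=1` models the much lower caps some agreements apply, which leaves even more of the loss on your side.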

Take Meta, for instance. In 2023, EU regulators fined the company approximately €1.2 billion (about $1.3 billion) for data transfer violations. This case underscores how regulatory penalties can far exceed the value of a vendor contract [9]. Adding to the challenge, many vendor agreements exclude "indirect damages" like business losses, regulatory fines, or reputational damage [10].

Vendors often shield themselves from significant financial exposure, leaving you to shoulder the risk. As Bennett Jones aptly puts it:

"This one-sided allocation of risk means that even when harm originates from the vendor's technology... the organization bears the full burden of defense and liability, with little contractual recourse." [3]

This imbalance becomes even more concerning when you consider its overlap with data privacy obligations.

Ensuring Strong Data Privacy Protections

Vendor contracts frequently lack transparency about how customer data is stored or used. This lack of detail can leave your business vulnerable, especially if sensitive data is leveraged to improve the vendor’s services - potentially creating indirect competitive risks [3].

To address these vulnerabilities, consider negotiating "super-caps" for high-risk scenarios. For example, propose liability limits of three times your annual fees or set a fixed cap (e.g., $2 million) specifically for data breaches and privacy violations [7][10]. Additionally, ensure the contract explicitly carves liabilities tied to gross negligence, willful misconduct, or fraud out of any cap, so they remain unlimited.
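One way to sanity-check a proposed cap structure is to model it and run a few claim scenarios through it. The sketch below is an illustration only: the class `LiabilityTerms`, the claim-type labels, and the $1 million damages figure are all hypothetical. It models the "three times annual fees" variant; a fixed super-cap such as $2 million would replace the multiplication with a constant.

```python
import math
from dataclasses import dataclass


@dataclass
class LiabilityTerms:
    annual_fee: float
    general_cap_multiple: float = 1.0   # standard cap: 12 months of fees
    super_cap_multiple: float = 3.0     # super-cap: 3x annual fees
    # Hypothetical claim labels; a real contract defines these in its own words.
    super_cap_claims: frozenset = frozenset({"data_breach", "privacy_violation"})
    uncapped_claims: frozenset = frozenset(
        {"gross_negligence", "willful_misconduct", "fraud"}
    )

    def cap_for(self, claim_type: str) -> float:
        """Return the maximum the vendor would owe for this type of claim."""
        if claim_type in self.uncapped_claims:
            return math.inf  # carved out of the cap entirely
        if claim_type in self.super_cap_claims:
            return self.annual_fee * self.super_cap_multiple
        return self.annual_fee * self.general_cap_multiple

    def recoverable(self, claim_type: str, damages: float) -> float:
        """Return what could be recovered: the damages, limited by the cap."""
        return min(damages, self.cap_for(claim_type))


terms = LiabilityTerms(annual_fee=50_000)
print(terms.recoverable("service_outage", 1_000_000))  # 50000.0
print(terms.recoverable("data_breach", 1_000_000))     # 150000.0
print(terms.recoverable("fraud", 1_000_000))           # 1000000
```

Even with the super-cap, the data breach scenario recovers only a fraction of the hypothetical loss, which is an argument for pressing for a fixed dollar floor as well as a multiple.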

To further protect your organization, require the vendor to adhere to SOC 2 or an ISO security standard such as ISO/IEC 27001, verified by annual audits. Also, insist that data be returned in a usable format and fully erased within 30 days of contract termination, with certification provided by an authorized officer [4].
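A deletion clause only helps if someone tracks the deadline. As a rough sketch, assuming the 30 days are counted as calendar days from the termination date (your contract may define this differently), the check could look like the following. The function names are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

DELETION_WINDOW_DAYS = 30  # the window from the clause above, assumed to be calendar days


def deletion_deadline(termination_date: date) -> date:
    """Return the last day by which the vendor must certify erasure."""
    return termination_date + timedelta(days=DELETION_WINDOW_DAYS)


def deletion_overdue(termination_date: date, certified_on: Optional[date], today: date) -> bool:
    """True if the certificate arrived late, or is still missing after the deadline."""
    deadline = deletion_deadline(termination_date)
    if certified_on is None:
        return today > deadline
    return certified_on > deadline


print(deletion_deadline(date(2025, 1, 31)))  # 2025-03-02
```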

These steps can help you regain some balance in the risk equation while safeguarding your data.

Vendor Transparency and Subcontractor Risks

Third-Party Risks in AI and SaaS Contracts

When working with an AI vendor, there’s often a hidden layer of subcontractors involved - ones you may not even know about. This creates a disconnect between what the vendor promises and what’s actually delivered.

History shows how costly this can be. Companies have faced multimillion-dollar losses, hefty legal bills, and mandatory breach notifications impacting thousands of individuals when undisclosed third-party processing violated compliance standards. These situations highlight the dangers of not knowing who’s really handling your data.

The issue goes beyond a single bad actor. Many AI tools are built on a foundation of third-party APIs, datasets, and models that vendors may not fully disclose - or even understand themselves. This lack of visibility makes it nearly impossible for you to ensure compliance with transparency or ethical standards required by your clients. For instance, some undisclosed subcontractors might store or process data in jurisdictions that conflict with your data residency requirements. This could leave you exposed to regulatory risks under laws like the GDPR or the EU AI Act [3][11].

Building Transparency Into Vendor Agreements

To protect yourself, start by comparing your client’s transparency requirements with your AI vendor’s terms before signing anything. If your client insists on knowing about subcontractors, make sure you can disclose the use of the AI tool without breaching the vendor’s confidentiality clauses [1].

Negotiate clauses that require prior written approval for adding new subcontractors or third-party APIs, especially for sensitive projects [11]. Push for the right to audit the vendor’s AI supply chain and insist on receiving technical documentation that explains model logic and the origins of training data [11].

"Require subcontractors to meet the same AI standards as primary vendors. Include flow-down provisions for AI requirements. Maintain visibility into AI supply chain dependencies." – Angela P. Doughty, Attorney, Ward and Smith, P.A. [11]

You may also want to create a standalone AI Addendum to address specific risks, such as how subcontractors handle data or how model updates are managed - issues that standard Master Subscription Agreements often overlook [1]. Additionally, your contracts should specify that any AI-generated outputs from subcontractors must go through human review to ensure they meet client quality and accuracy expectations [1].


Internal Governance and Policy Alignment

Bridging the Gap Between Contracts and Execution

Even the best-written contracts can fall apart if internal practices don’t align with them. As mentioned earlier, discrepancies between vendor agreements and internal operations can lead to serious financial and regulatory troubles. For instance, if a client contract guarantees full intellectual property ownership but the AI vendor only offers a license, using the tool could immediately put the company in breach of its agreement [1].

"Internal governance must match your contracts. Even airtight terms can't protect you from employee misuse without clear policies." – Gouchev Law [1]

While negotiating contracts carefully reduces external risks, ensuring internal policies align with those agreements is equally important. To avoid breaches, businesses must implement clear internal guidelines that reflect the terms of their contracts.

Creating Governance Frameworks for AI and SaaS

Misalignment between contracts and internal practices can expose businesses to unnecessary risks, but a well-structured governance framework can bridge this gap. Start by developing internal policies for AI and SaaS usage before entering into agreements with vendors. These policies should ensure that your team’s practices match your contractual obligations. Early on, compare your existing client commitments with new vendor terms to identify potential conflicts. Clearly outline which types of data - such as proprietary code, client personal information, or regulated health data - should never be shared with AI tools.

To make compliance easier, consider implementing a simple data classification system. For example:

- Green: Public content, safe for unrestricted use.

- Yellow: Internal documents, requiring caution.

- Orange: Customer personal information, needing strict controls.

- Red: Regulated data, completely restricted from AI tools.

This kind of visual system helps employees quickly understand what’s allowed and reduces the likelihood of accidental breaches. Additionally, require human oversight of AI outputs to catch errors or biases that contractual terms alone can’t address [2].
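If your team routes AI requests through an internal gateway or script, the four color tiers can also be enforced in code rather than left to memory. The following is a minimal sketch under that assumption; the tool names and the `TOOL_CEILING` mapping are hypothetical placeholders for whatever your own policy approves.

```python
from enum import IntEnum


class DataClass(IntEnum):
    GREEN = 0    # public content, safe for unrestricted use
    YELLOW = 1   # internal documents, requiring caution
    ORANGE = 2   # customer personal information, strict controls
    RED = 3      # regulated data, never sent to AI tools


# Hypothetical policy: the highest tier each tool is approved to receive.
TOOL_CEILING = {
    "public_chatbot": DataClass.GREEN,
    "enterprise_assistant_no_training": DataClass.ORANGE,
}


def may_send(tool: str, label: DataClass) -> bool:
    """Allow a request only if the tool is approved for this tier; RED is always blocked."""
    if label is DataClass.RED:
        return False
    ceiling = TOOL_CEILING.get(tool)  # unknown tools have no ceiling and are denied
    return ceiling is not None and label <= ceiling


print(may_send("public_chatbot", DataClass.YELLOW))                    # False
print(may_send("enterprise_assistant_no_training", DataClass.ORANGE))  # True
```

Denying unknown tools by default means a newly adopted vendor stays blocked until someone has compared its contract terms against your policy and added it to the mapping.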

Keep a detailed audit trail of AI usage and API activity to demonstrate compliance when needed. Review both your AI-related terms and internal policies every quarter to stay ahead of rapid technological advancements and shifting regional regulations [9]. Regular updates to your governance framework ensure your business remains prepared to navigate the evolving challenges of AI and SaaS.
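An audit trail does not need to copy the sensitive text it is meant to protect. As one possible approach, the sketch below writes a JSON line per AI call and stores only a SHA-256 digest of the prompt. The field names and the function `audit_record` are assumptions for illustration, not a required format.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, tool: str, data_class: str, prompt: str) -> str:
    """Build one JSON line describing an AI call without storing the prompt itself."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "data_class": data_class,
        # A digest shows which prompt was sent without copying its contents into the log.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    return json.dumps(entry, sort_keys=True)


# Example: append the record to a log file (the path is a placeholder).
# with open("ai_usage_audit.jsonl", "a", encoding="utf-8") as log:
#     log.write(audit_record("jdoe", "enterprise_assistant", "yellow", "Summarize Q3 notes") + "\n")
```

Because each line is self-contained JSON, the log can be filtered by user, tool, or data class during a quarterly review.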

Conclusion: Protecting Your Business Through Smarter Deal-Making

Key Takeaways

A deal only protects you if you address risks before signing. Consider this: 92% of AI vendors claim excessive data usage rights, while just 33% offer indemnification for third-party IP claims [12]. These numbers aren’t just statistics - they’re red flags highlighting the terms many companies accept without a second thought.

To safeguard your business, start by adding an AI Addendum to your contracts to address specific risks tied to training data and ownership. Dive into each vendor’s regulatory history and the origins of their training data. Compare your AI vendor agreements with existing client contracts to identify potential conflicts that could lead to breaches. And don’t overlook negotiating higher liability limits for critical risks like IP infringement and data breaches, especially since 88% of AI vendors cap liability, often leaving you to shoulder the financial fallout [12].

"The companies that succeed with AI use won't be the fastest, they'll be the most prepared." – Jana Gouchev, Managing Attorney, Gouchev Law [1]

Next Steps for Smarter SaaS and AI Deals

The insights above highlight the need for constant vigilance in managing contracts. Start by reviewing your agreements quarterly. With only 17% of AI vendors fully committing to regulatory compliance [12], relying on standard terms is a gamble. Build internal governance frameworks before entering new agreements, ensuring your team’s practices align with contractual obligations. Require full transparency regarding subcontractors and data sources, and insist on termination clauses that ensure secure data disposal.

New laws like the Colorado Artificial Intelligence Act and the EU AI Act demand continuous updates [1][12][7]. Your contracts must evolve to meet these requirements. Regular reviews - both of vendor agreements and internal policies - help you stay ahead. Remember the costly lessons of companies caught out by undisclosed third-party processing; taking precautions now can save you from similar losses later. The goal isn’t to shy away from AI and SaaS tools - it’s to use them wisely. Protect what matters most: your data, intellectual property, and reputation. By aligning your internal policies with contract terms today, you’re preparing your business for tomorrow’s innovations.


FAQs

What risks should I watch out for in AI and SaaS contracts?

When dealing with AI and SaaS contracts, it’s crucial to be aware of potential risks that could impact your business. One major concern is limited liability clauses. These clauses often cap the vendor's responsibility for damages, which means your company could be left bearing significant financial or operational burdens if something goes wrong.

Another area to scrutinize is indemnification restrictions. These limitations can leave you vulnerable, especially in situations like third-party intellectual property disputes, where you might expect stronger protections.

Pay close attention to clauses that grant vendors broad rights to your data. Such terms might allow them to reuse your inputs or outputs, raising serious concerns about data privacy and ownership. Also, be wary of agreements that let vendors unilaterally change terms, as this can lead to unpredictable or unfair practices down the line.

To safeguard your business, take the time to thoroughly review these clauses. Negotiating terms that protect your interests and ensure control over your data is not just advisable - it’s essential.

How can I make sure my data isn’t used by vendors to train their models?

To keep your data safe from being used for vendor training, start by thoroughly examining the terms outlined in your contract. Pay close attention to clauses about data handling, particularly those that mention whether your information can be reused for training or improving models. Many standard agreements may grant vendors the right to use customer data for these purposes unless there are explicit restrictions in place.

To protect your information, consider negotiating contract terms that clearly limit data usage. Focus on adding provisions that address ownership rights, restrictions on training use, and requirements for data deletion. Including a specific AI addendum in your agreement can further ensure that your data remains private and fully under your control. It's also crucial to confirm that the vendor’s Terms of Service align with your expectations and do not allow data reuse without your explicit consent.

By taking these precautions, you can better safeguard your sensitive information and minimize the risk of it being used in unintended ways.

How can I protect my intellectual property when using AI-generated content?

To protect your intellectual property (IP) in AI-generated content, start by ensuring that all vendor agreements and licensing contracts explicitly outline ownership rights. This clarity ensures your company maintains control over the IP you create or input into AI systems. Be especially mindful of clauses related to data use and training rights, as these might permit vendors to reuse or repurpose your proprietary data without your direct approval.

Negotiating confidentiality and IP ownership terms is equally critical - both for the AI inputs you provide and the outputs generated. This helps safeguard against unintended licensing or losing control over your rights. Internally, establish clear policies for handling AI-generated content and ensure your team adheres to best practices to prevent misuse. In short, thorough contract reviews combined with strong internal governance are essential to securing your IP in this rapidly evolving landscape.